I was reading Resident Contrarian’s recent blog post when a throwaway line at the beginning caught my attention, prompting a longer train of thought:

The most troublesome arguments to try to make are those that are both very specific and limited (and are by nature of that more or less non-controversial) …

I’ll need to re-read his post multiple times to understand his actual argument; I find that is often true of his writing, and of the rationalist community’s (even though he hates them). Until then, I want to address just these lines, and the sentiment behind them, which frustrates me in many aspects of my life.

Yes, specific and limited arguments are less controversial, but they’re not less interesting. I firmly believe being specific and narrow in your line of questioning or argument (or even interests) is a good thing. It usually doesn’t carry the baggage that RC is afraid of, and if it does, just state outright that you don’t want to generalize, and move on.

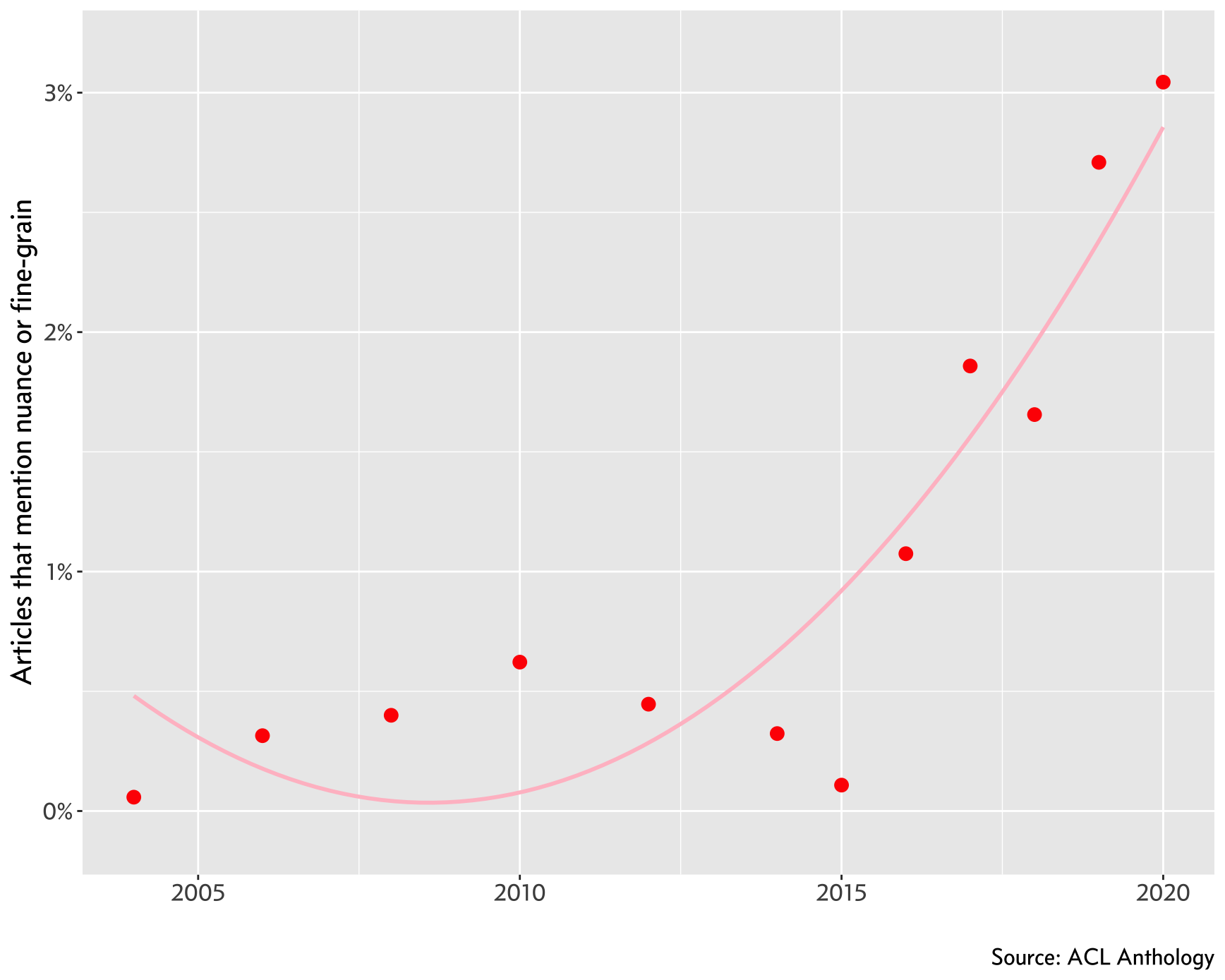

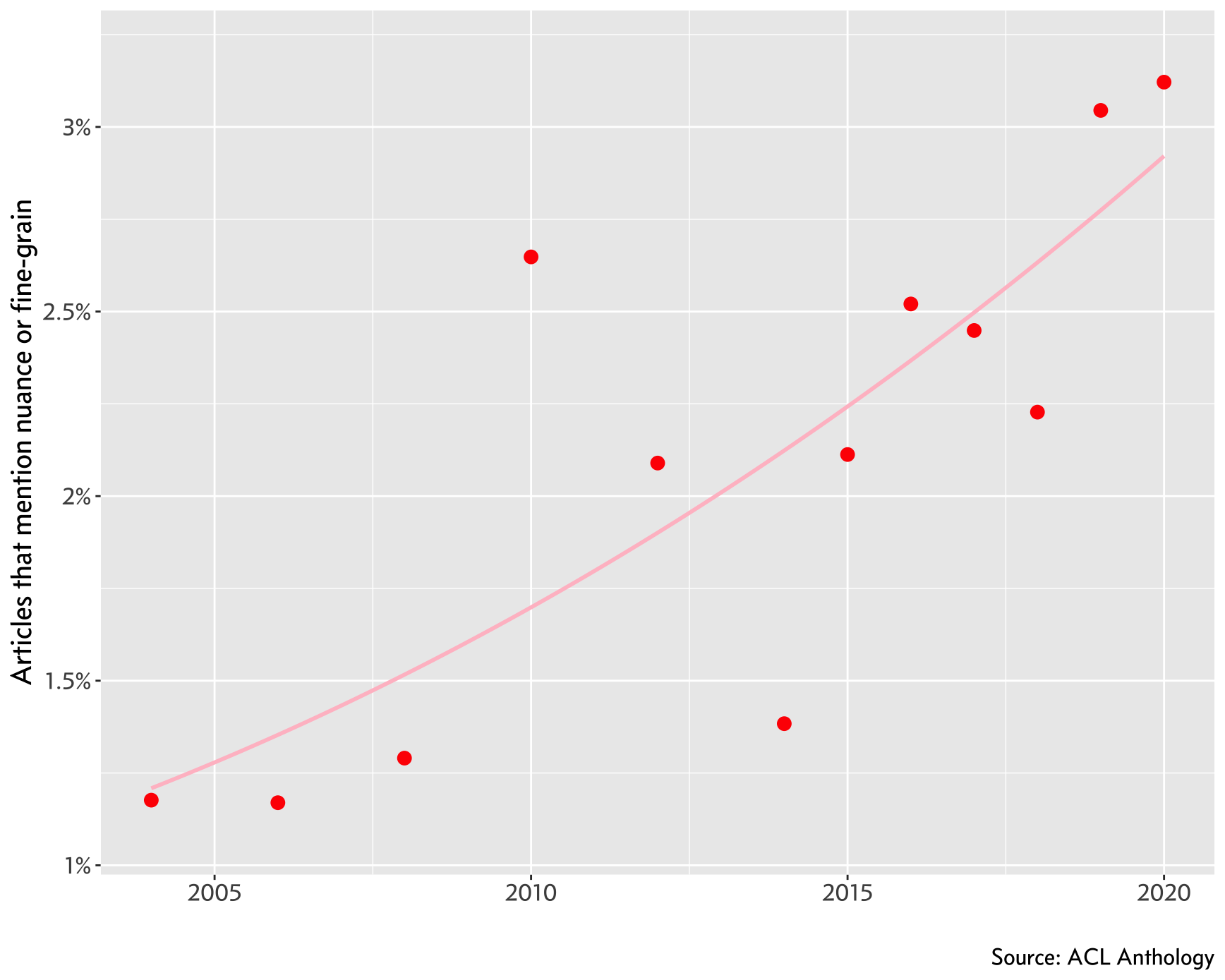

I see this with research all the time; work on a niche subject or topic needs to be generalizable beyond the domain of analysis, or the authors will have a hard time with reviewers. You can’t just study, for instance, how Eagles fans talk about Cowboys fans online. What about other teams? What if it’s not online? What if it’s not sports? I get that a research question is always grounded in past and contemporary work, and we need to be cognizant of that and report it, but I think we’re overdoing it in Computational Linguistics and NLP right now. Anything and everything you do has to be generalizable, or fit into the existing work perfectly.

Not that this problem is unique to research in my area, or to research in general. Arguments online and in person get derailed because we forget the specific circumstance or incident; it’s so much easier to generalize without actively wondering if it makes sense to generalize. I do this too, and am trying hard every day to counter this (I suspect) deep-rooted tendency in my mind to find patterns, over-generalize, or at worst, stereotype.

I’m not happy with this post as it stands; I feel like I’m jumping between the specificity of arguments, questions, beliefs, and thoughts. That’s fine — I’ll always be thinking about this, and writing my thoughts down clarifies them for me. Maybe in a few months, I’ll revisit this topic. But today, I do want to mention and link to two comments that resonated with me, and possibly catalyzed my thinking on this topic. First, from Christopher Hitchens in this video (starting from 18 seconds in), on how to ask a question:

Here’s a piece of advice about asking a question. Try and narrow it down a bit… if you give me too much to choose from, you’re likely to get everything or nothing, and I certainly can’t give you everything…

Also, here’s Nikki Giovanni, starting at 21:10, on being parochial in her activism (the entire interview is amazing):

… because I tend to be parochial, for one thing, and I tend to care about Afro-Americans … It’s very parochial because I don’t care about my third world brothers and sisters … If I deal with my block, and you do with your block, we’ll have two good blocks.

Being parochial in one’s passions might seem like a different thing from what I was talking about earlier, but the two are not separate in my mind. I don’t think I’m overgeneralizing by lumping them together. I think what they’re both saying succinctly describes the value of being narrow, be it in questions, arguments, or interests.
